News in Short:

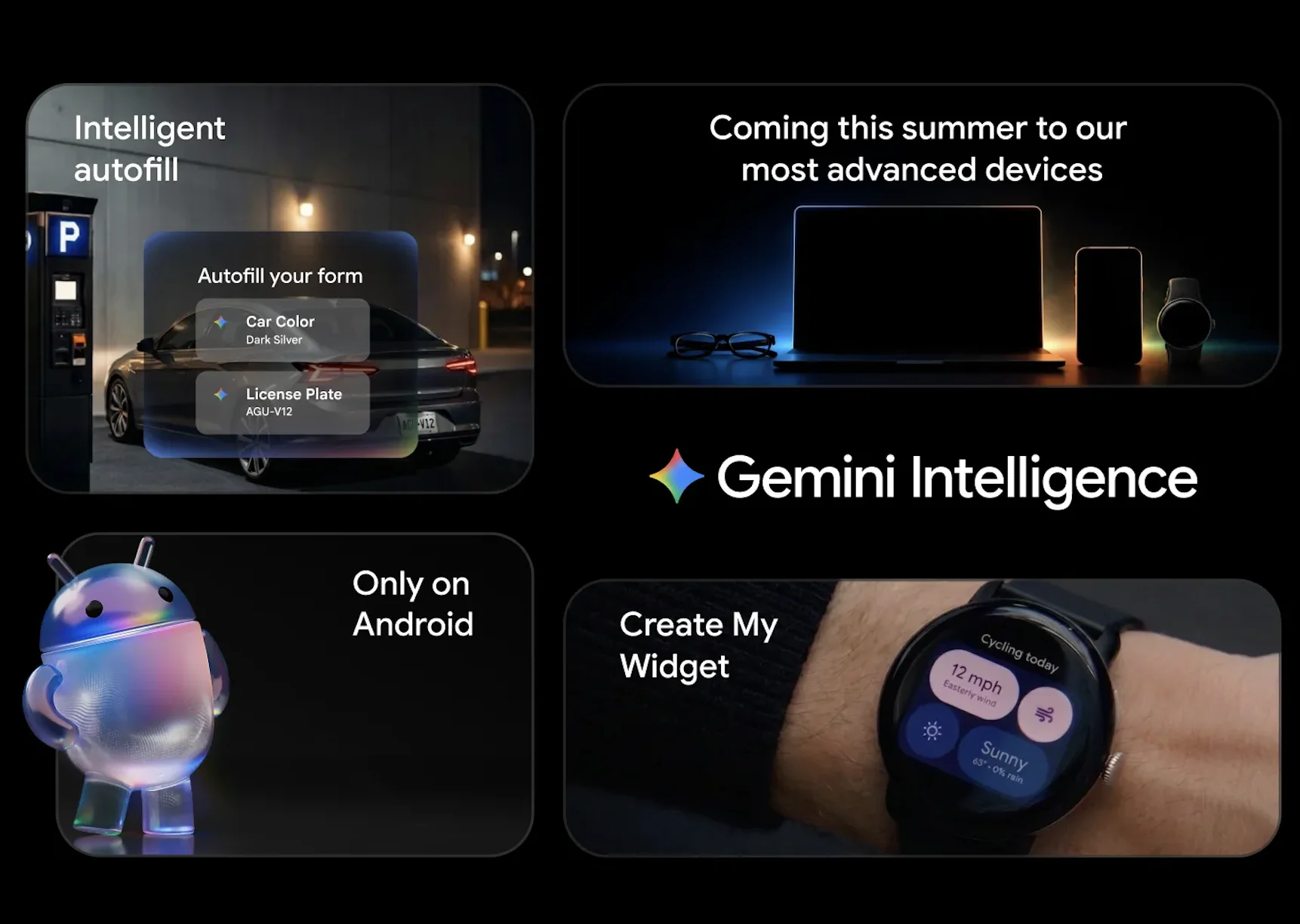

- Google has announced Gemini Intelligence on Android with proactive AI-powered features across phones and connected devices.

- The update introduces app automation, smarter Chrome browsing, intelligent Autofill, and AI-powered voice writing.

- Gemini Intelligence will first roll out on Samsung Galaxy S26 and Google Pixel 10 devices this summer.

- Google says the experience will later expand to Android watches, cars, glasses, and laptops.

Google is pushing Android deeper into the AI era with the launch of Gemini Intelligence on Android. The company says the new system combines Gemini AI with premium Android hardware to create a more proactive and personalized experience.

Instead of acting like a traditional chatbot, Gemini Intelligence is designed to handle tasks, understand screen context, automate actions across apps, and assist users throughout the day. The rollout starts this summer on Samsung Galaxy S26 and Google Pixel 10 devices before expanding across the broader Android ecosystem later this year.

What Is Gemini Intelligence on Android?

Gemini Intelligence on Android is Google’s new AI-powered experience that blends Gemini models directly into Android workflows. The system aims to reduce manual interactions by letting AI complete tasks, understand intent, and automate actions in real time.

Google says the experience is built around privacy and user control. Gemini only performs actions when users request them, and it stops once tasks are completed.

This marks a major shift in how Android devices operate. Instead of waiting for commands one step at a time, Gemini Intelligence is being positioned as an assistant that can proactively connect apps, understand context, and manage workflows behind the scenes.

How Will Gemini Intelligence Automate Android Tasks?

One of the biggest changes coming with Gemini Intelligence on Android is multi-step app automation. Google says Gemini can navigate across apps and complete complex actions that usually require several manual steps.

For example, Gemini can help users reserve a front-row bike for a fitness class. It can also locate a class syllabus in Gmail and then add the required books to a shopping cart.

Google also highlighted how Gemini can work using screen context and images. Users can long-press the power button while viewing a grocery list and ask Gemini to create a shopping cart instantly. Similarly, someone can snap a photo of a travel brochure and ask Gemini to find a matching group tour online.

This creates a more agent-like Android experience where the AI understands what is visible on the screen and acts accordingly.

Gemini in Chrome Wants To Change Web Browsing

Google is also integrating Gemini directly into Chrome on Android. Starting in late June, Gemini in Chrome will help users summarize information, compare content across websites, and assist with online research.

The company is also introducing what it calls “Chrome auto browse.” This feature can automate repetitive online actions like booking appointments or reserving parking spaces.

The move signals Google’s growing focus on turning browsers into AI-assisted workspaces instead of passive search tools.

Can Android Finally Fix Mobile Form Filling?

Google believes AI can also solve one of the most frustrating smartphone experiences: filling out forms on small screens.

Gemini Intelligence upgrades Autofill with Google by connecting it with Gemini’s Personal Intelligence system. This allows Android to pull relevant information from connected apps and automatically complete more detailed form fields.

Google stressed that the feature is opt-in. Users must manually enable the Gemini connection, and they can disable it anytime through settings.

That opt-in approach may become more important as AI systems gain deeper access to personal data and app activity.

What Is Rambler and Why Does It Matter?

Google is also introducing a new feature called Rambler inside Gboard. The feature focuses on transforming natural speech into cleaner, more polished writing.

Unlike standard voice typing, Rambler understands pauses, repeated phrases, filler words, and corrections during speech. It then restructures spoken thoughts into concise written messages.

Google says Rambler supports multilingual conversations as well. Users can switch between languages like English and Hindi in the same sentence while the AI maintains context and tone.

The company also clarified that Rambler only uses audio for real-time transcription and does not store recordings.

Android Widgets Are Becoming AI-Powered

Another major addition is Create My Widget, which introduces generative AI-powered widgets on Android.

Users can describe the kind of widget they want using natural language, and Gemini will generate a custom dashboard. Google showcased examples like meal-prep recipe widgets and personalized weather widgets focused only on cycling conditions like wind speed and rain.

These widgets will also work on Wear OS devices, expanding Gemini Intelligence beyond smartphones.

A New Android Design Built Around AI

Gemini Intelligence also arrives with an updated visual design language based on Material 3 Expressive. Google says the interface uses purposeful animations to reduce distractions and improve focus.

The redesign appears to support Google’s broader vision of AI-first computing, where interfaces become more adaptive and context-aware instead of static.

Why Gemini Intelligence on Android Matters

Google’s latest Android push shows how smartphone AI is rapidly evolving beyond chat interfaces. Gemini Intelligence on Android introduces a system where AI can understand screens, automate actions, manage workflows, and personalize interfaces across multiple devices.

With Samsung Galaxy S26 and Pixel 10 devices leading the rollout this summer, Android users may soon experience phones that act less like tools and more like active digital assistants. The larger test, however, will be whether users trust Gemini Intelligence on Android enough to let AI handle everyday tasks in real-world situations.

Google’s biggest move here may not be a single feature. It may be the shift toward making Android itself feel proactive, contextual, and continuously aware of what users need next.