News in Short:

- Google is developing an AI-powered mouse pointer that understands what users point at and why it matters.

- The new system combines pointing, voice commands, and Gemini AI to reduce the need for long prompts.

- Google plans to bring these capabilities into Chrome and its upcoming Googlebook laptop experience.

- The company says the goal is to make AI work across apps instead of forcing users into separate AI windows.

The humble mouse pointer may finally be getting its biggest upgrade in decades. Google has revealed an experimental AI-powered mouse pointer that can understand on-screen context, voice commands, and user intent through Gemini AI. The company says this could make interacting with computers faster, more natural, and less dependent on typing prompts.

The announcement comes as AI companies race to make interfaces smarter and more human-like. However, Google’s latest concept focuses on something people already use every day: the cursor.

Why Is Google Reinventing the Mouse Pointer?

For more than 50 years, the mouse pointer has worked in mostly the same way: it clicks, drags, and selects. Meanwhile, modern AI systems still force users to leave their workflow, open a chatbot window, and explain the context manually.

Google believes that process feels outdated.

According to the company, users currently need to “drag their world into AI.” Its new vision flips that approach: AI should understand what users already see on-screen and respond inside their existing workflows.

In practical terms, that means a user could point at an image of a building and simply say, “Show me directions.” The AI system would identify the building, open a maps app, and provide directions without further prompting.

That shift could remove much of the copying, pasting, and explaining that AI tools demand today.

How Does the AI Mouse Pointer Work?

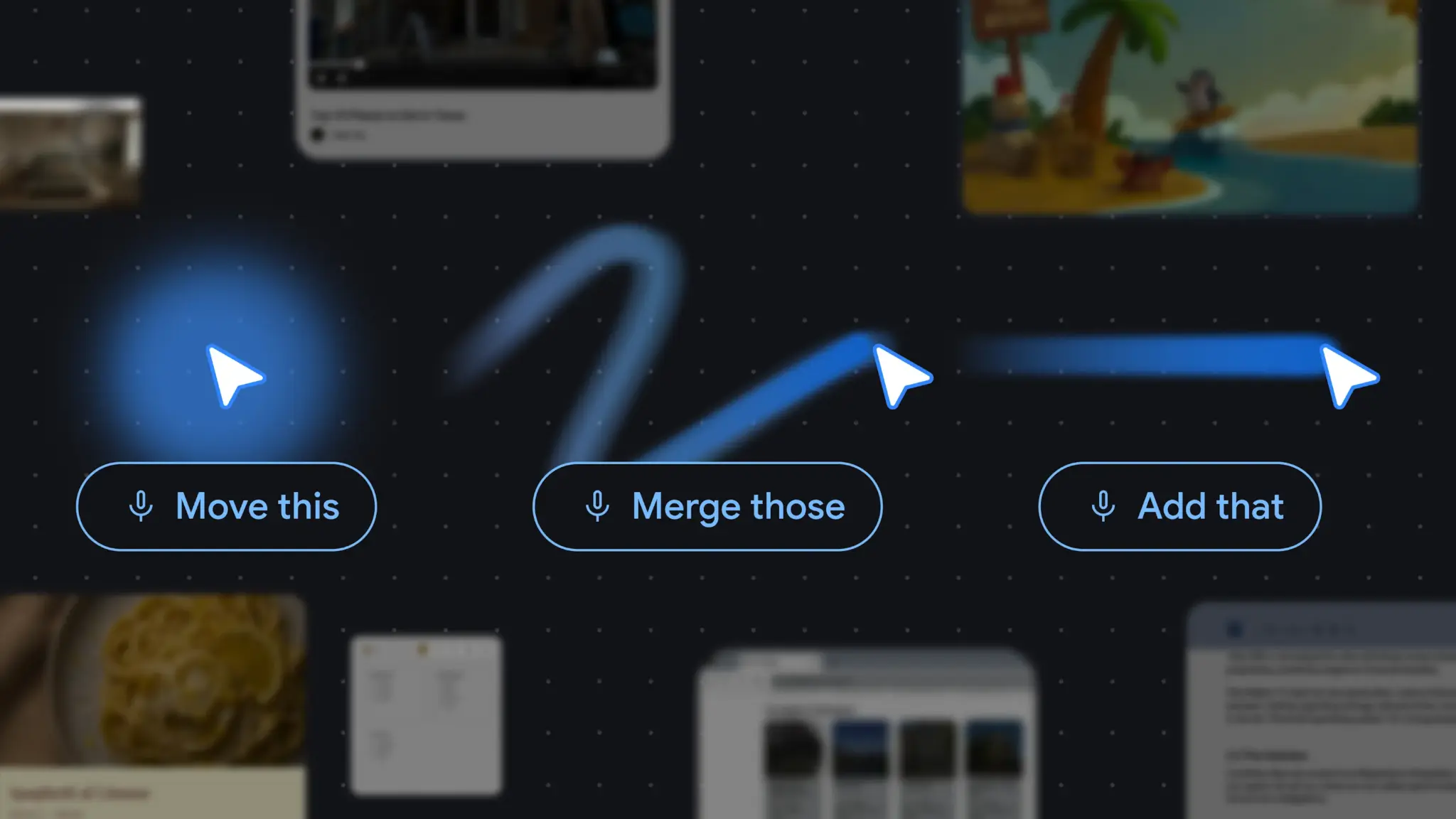

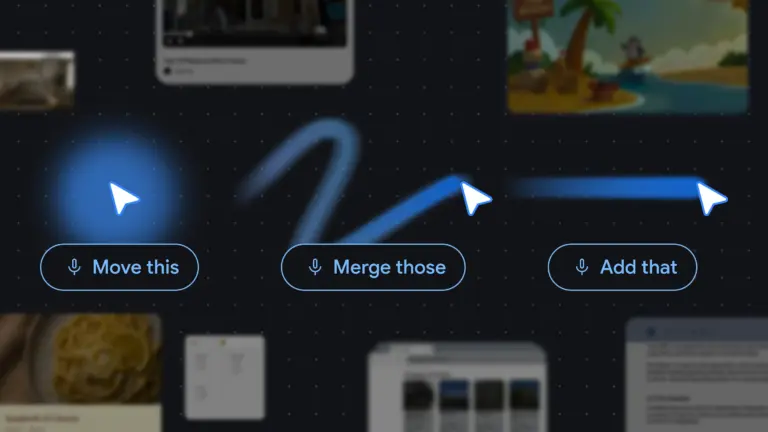

Google’s experimental system combines three layers of interaction. It uses the mouse pointer for location, Gemini AI for context understanding, and voice for intent.

The company says the AI can identify specific text, code blocks, objects, tables, or images directly from the screen. As a result, users no longer need to describe everything manually.
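To make the three-signal idea concrete, here is a minimal Python sketch of how a pointer location, the recognized on-screen content, and a spoken command might be bundled into one structured request. Google has not published an API for this system, so every name below (`PointerEvent`, `ScreenContext`, `Request`, `build_request`) is hypothetical and only illustrates the concept.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PointerEvent:
    """Where the pointer is (hypothetical type, screen coordinates)."""
    x: int
    y: int


@dataclass(frozen=True)
class ScreenContext:
    """What was recognized under the pointer (hypothetical type)."""
    kind: str     # e.g. "text", "code", "table", "image"
    content: str  # short description or extracted content


@dataclass(frozen=True)
class Request:
    """One request that carries location, context, and intent together."""
    location: tuple[int, int]
    context: ScreenContext
    intent: str


def build_request(pointer: PointerEvent, context: ScreenContext, utterance: str) -> Request:
    # Normalize the spoken command and attach it to what the user is pointing at,
    # so the user never has to describe the on-screen content in words.
    return Request(
        location=(pointer.x, pointer.y),
        context=context,
        intent=utterance.strip().lower(),
    )


request = build_request(
    PointerEvent(412, 230),
    ScreenContext(kind="table", content="Q1 sales by region"),
    "  Make this a pie chart ",
)
print(request)
```

The point of the sketch is that the short phrase "make this a pie chart" is only meaningful because the request also carries the table under the pointer.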

For example, someone could hover over a statistics table and ask for a pie chart version. A user reading a recipe could highlight the ingredients and ask for the quantities to be doubled. Similarly, a PDF could be summarized into bullet points and pasted directly into an email draft.

Google describes this as moving from “prompt-heavy” AI interactions toward gesture-based communication.

That idea mirrors how humans naturally communicate with each other. People often say phrases like “move this,” “fix that,” or “what does this mean?” while pointing at something. Google wants computers to finally understand that shorthand.

What Makes This Different From Existing AI Tools?

Most current AI assistants operate inside isolated apps or side panels. Users must constantly switch tabs, upload files, and explain context repeatedly.

Google’s AI mouse pointer aims to eliminate that disconnect.

The company says its system can continuously understand visual and semantic context around the pointer. That means the cursor becomes more than a navigation tool. It turns into an intelligent layer sitting across apps and websites.

Another important change involves “actionable entities.” Traditionally, computers only tracked cursor position. Now, AI models can identify what the pointer is actually hovering over.
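The difference between tracking a position and identifying an entity can be sketched as a simple hit test. The Python example below assumes some upstream model has already labeled regions of the screen with bounding boxes; the `Entity` class and `entity_under_pointer` function are hypothetical names used for illustration, not part of any Google product.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Entity:
    """A labeled on-screen region (hypothetical; boxes assumed to come from a model)."""
    label: str
    left: int
    top: int
    right: int
    bottom: int

    def contains(self, x: int, y: int) -> bool:
        return self.left <= x < self.right and self.top <= y < self.bottom

    def area(self) -> int:
        return (self.right - self.left) * (self.bottom - self.top)


def entity_under_pointer(entities: list[Entity], x: int, y: int) -> Optional[Entity]:
    """Return the smallest entity containing the pointer, or None if nothing is hit."""
    hits = [e for e in entities if e.contains(x, y)]
    # Prefer the smallest box: a photo inside an article is more specific than the article.
    return min(hits, key=Entity.area) if hits else None


screen = [
    Entity("article", 0, 0, 1000, 800),
    Entity("building photo", 100, 100, 400, 300),
]

inside_photo = entity_under_pointer(screen, 150, 150)
outside_photo = entity_under_pointer(screen, 500, 500)
off_screen = entity_under_pointer(screen, 2000, 2000)
```

Choosing the smallest matching region is one simple heuristic for deciding what the user most likely means; a real system would presumably weigh many more signals.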

That opens new possibilities.

From a paused frame of a travel video, the system could surface booking links for nearby restaurants. A handwritten note could be turned into an interactive task list. Product images on shopping sites could be compared through simple voice commands.

Instead of typing detailed prompts, users interact naturally with content already visible on-screen.

Where Will Google Use This Technology First?

Google confirmed that these interaction principles are already being integrated into several products.

The first major rollout is happening inside Chrome. Users will soon be able to point at webpage elements and ask Gemini questions directly about selected content.

For example, users could compare products without copying information into a chatbot. They could also visualize furniture inside a room image while browsing online.

Google also announced that “Magic Pointer” will arrive on its upcoming Googlebook laptops. The company says the feature will bring Gemini-powered interactions directly into the operating system experience.

Additionally, Google plans to continue testing future concepts through Google Labs and experimental projects like Disco.

Why Does This Matter Beyond Chrome?

The bigger story is not just about Chrome or laptops. It is about how AI interfaces may evolve over the next few years.

Today’s AI assistants still depend heavily on typing, switching windows, and manual prompting. Google’s direction suggests future systems may rely more on gestures, voice, and environmental understanding instead.

That could make AI tools easier for mainstream users who struggle with prompt writing.

At the same time, it may change how software itself gets designed. Apps may no longer need separate AI buttons everywhere if the pointer becomes context-aware by default.

The AI mouse pointer also signals a broader industry shift toward “ambient AI,” where intelligence quietly works in the background without interrupting workflows.

Google’s latest experiment shows that even one of computing’s oldest tools can become part of the AI race. And if the company succeeds, the mouse pointer may evolve from a simple cursor into a real-time AI assistant that understands both context and intent.