Key Highlights:

- Meta says views and watch time for original content roughly doubled in the second half of 2025, following its earlier crackdowns on unoriginal posts.

- Facebook is testing new tools that make it easier for creators to report impersonation accounts.

- Meta says it removed around 20 million impersonator accounts in 2025.

- Updated guidelines now define what Facebook considers “original content.”

Facebook is introducing new tools designed to make it easier for creators to report impersonators and protect their content. The update is part of Meta’s broader effort to reduce spam, duplicate posts, and AI-generated “slop” that has increasingly flooded the platform.

The company says the tools will simplify the reporting process and help creators flag duplicate content from a central dashboard. The move comes as Facebook tries to strengthen its reputation as a platform where original creators can still grow and monetize their work.

The announcement follows months of criticism that the platform had become overwhelmed by low-quality AI-generated posts and recycled content.

Why is Facebook focusing on impersonation and “AI slop”?

Meta’s latest update comes after growing complaints from users and creators about the spread of spammy content across Facebook feeds.

In recent months, critics have described parts of the platform as an “AI slop hellscape,” where low-effort posts, recycled media, and AI-generated content often outperform original work.

For creators, the issue goes beyond visibility. Many depend on Facebook’s monetization programs, and duplicate or impersonated content can directly reduce their earnings.

Meta says this is why the company has been pushing changes to prioritize original posts and reduce unoriginal uploads.

If creators feel their work is repeatedly copied or overshadowed by spam, they may shift their attention to other platforms. That could weaken Facebook’s position as a creator destination.

What are the new Facebook impersonation reporting tools?

The latest update focuses on improving how creators report impersonation and duplicated content.

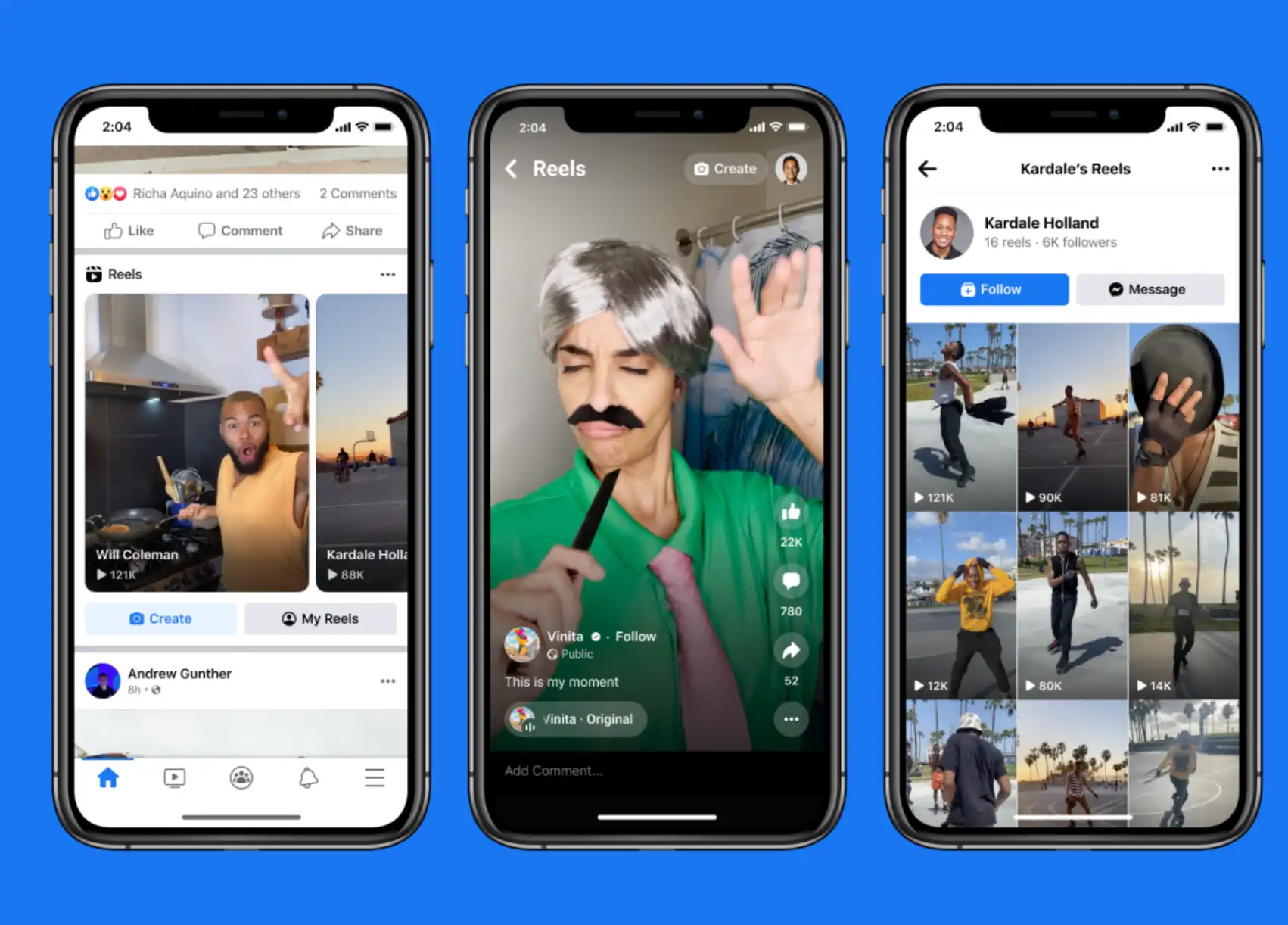

Facebook already runs systems that detect when the same video or reel appears multiple times across the platform. However, the reporting process previously required several steps and often felt fragmented. With the new tools, which are still in testing, creators will be able to submit reports from a single centralized interface.

The system works like this:

Creators will receive alerts if their reels appear elsewhere on Facebook after being published. From a central dashboard, they can review those posts and flag them if they believe they were uploaded by impersonators.

The update aims to simplify reporting so creators can take action faster. However, Meta clarified that the current tool primarily focuses on matching duplicate content. It does not yet detect unauthorized use of a creator’s face, voice, or likeness. That remains a major challenge across social media platforms as AI tools make it easier to replicate identities.
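To make the alert, review, and flag flow concrete, here is a minimal Python sketch. It is not Meta's implementation: the `Reel` and `Dashboard` classes, the `find_duplicates` helper, and the use of a SHA-256 hash as a fingerprint are all assumptions made for illustration. An exact hash only catches byte-identical copies, whereas a production system would presumably need matching that survives re-encoding or cropping.

```python
from __future__ import annotations

import hashlib
from dataclasses import dataclass


@dataclass
class Reel:
    reel_id: str
    uploader: str
    media: bytes  # stand-in for the real video file


def fingerprint(media: bytes) -> str:
    # Hypothetical exact-match fingerprint; only catches identical bytes.
    return hashlib.sha256(media).hexdigest()


def find_duplicates(original: Reel, uploads: list[Reel]) -> list[Reel]:
    # Return uploads by other accounts whose media matches the original.
    target = fingerprint(original.media)
    return [
        reel
        for reel in uploads
        if reel.uploader != original.uploader
        and fingerprint(reel.media) == target
    ]


class Dashboard:
    """Hypothetical central dashboard for one creator."""

    def __init__(self, creator: str) -> None:
        self.creator = creator
        self.alerts: dict[str, Reel] = {}  # matches awaiting review
        self.flagged: list[str] = []       # reel ids reported as impersonation

    def notify(self, matches: list[Reel]) -> None:
        # Step 1: the creator is alerted about each matching reel.
        for reel in matches:
            self.alerts[reel.reel_id] = reel

    def flag_as_impersonation(self, reel_id: str) -> None:
        # Step 2: the creator reviews an alert and reports it.
        reel = self.alerts.pop(reel_id)
        self.flagged.append(reel.reel_id)


original = Reel("r1", "creator_a", b"clip-bytes")
uploads = [
    Reel("r2", "copycat", b"clip-bytes"),            # identical copy
    Reel("r3", "someone_else", b"different-bytes"),  # unrelated reel
    Reel("r4", "creator_a", b"clip-bytes"),          # creator's own re-upload
]

dashboard = Dashboard("creator_a")
dashboard.notify(find_duplicates(original, uploads))
dashboard.flag_as_impersonation("r2")
```

In this sketch only the copy uploaded by another account triggers an alert. Whether a match is actually impersonation is left to the creator, which mirrors the review step described above.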

Meta’s earlier crackdown shows early results

Meta says its earlier efforts to reduce unoriginal content have already produced measurable changes. According to the company, the number of views and time spent watching original content on Facebook roughly doubled during the second half of 2025 compared with the same period in 2024.

The company also reported progress against impersonation accounts. Meta says it removed around 20 million impersonator accounts in 2025, and that impersonation reports involving large creators dropped by about 33 percent during the same period.

These numbers suggest that the company’s earlier policies may have already reduced some of the most visible cases of impersonation. Still, critics argue that duplicate posts and AI-generated content remain widespread.

What counts as “original content” on Facebook now?

Alongside the new reporting tools, Meta is updating Facebook’s creator guidelines. The company now provides clearer definitions of what qualifies as original content.

According to the new guidelines, original content includes material that is filmed or produced directly by a creator. Reels that remix other posts but add analysis, commentary, discussion, or new information can also qualify.

In contrast, content with only minor edits will be considered unoriginal. This includes reposting someone else’s work or making small changes such as adding captions, borders, or visual overlays without meaningful transformation.

Posts that fall into this category may be deprioritized in Facebook’s recommendation systems. The goal is to make it harder for accounts to gain reach by recycling someone else’s work.
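The distinction drawn in the guidelines can be read as a simple rule set, sketched below in Python. This is only an illustration of the article's summary: the `Post` fields, the category names, and the `is_original` function are hypothetical, and Meta has not said its systems work this way.

```python
from dataclasses import dataclass, field

# Additions that the guidelines, as summarized above, treat as transformative.
TRANSFORMATIVE = {"analysis", "commentary", "discussion", "new_information"}

# Small changes that do not count as meaningful transformation.
MINOR_EDITS = {"captions", "borders", "overlays"}


@dataclass
class Post:
    self_produced: bool                 # filmed or produced by the poster
    additions: set = field(default_factory=set)


def is_original(post: Post) -> bool:
    # Material the creator made directly counts as original.
    if post.self_produced:
        return True
    # Reused material qualifies only if something transformative was added;
    # minor edits alone never change the outcome.
    return bool(post.additions & TRANSFORMATIVE)


own_reel = Post(self_produced=True)
reaction = Post(self_produced=False, additions={"commentary", "borders"})
repost = Post(self_produced=False, additions={"captions", "overlays"})
```

Here `own_reel` and `reaction` would count as original, while `repost` would not and could be deprioritized in recommendations.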

Facebook is not alone in tackling AI-generated impersonation

The growing use of AI tools has created new challenges for social media platforms. Deepfakes, voice cloning, and automated content generation make it easier to impersonate creators or replicate their work.

Facebook is not the only company responding. YouTube recently announced that it will expand its AI deepfake detection tools to cover politicians, public figures, and journalists.

Across the tech industry, companies are racing to build systems that can detect manipulated media while still allowing creators to remix and reinterpret existing content.

Balancing those goals has become increasingly complex.

What this means for creators and the future of Facebook

The latest updates show that Facebook is trying to reinforce its role as a platform where original creators can still thrive. By making impersonation reporting easier and clarifying what counts as original work, Meta hopes to reduce the spread of copied posts and low-value uploads.

The success of these efforts may determine whether creators continue to view Facebook as a viable publishing platform. If duplicate content and AI-generated spam continue to dominate feeds, creators could shift their attention to competing platforms.

For now, Meta’s latest updates signal a renewed effort to restore trust and visibility for original creators on Facebook.