Key Highlights:

- MAI-Transcribe-1 supports 25 languages and runs 2.5× faster than Azure Fast.

- MAI-Voice-1 generates 60 seconds of audio in one second and supports custom voices.

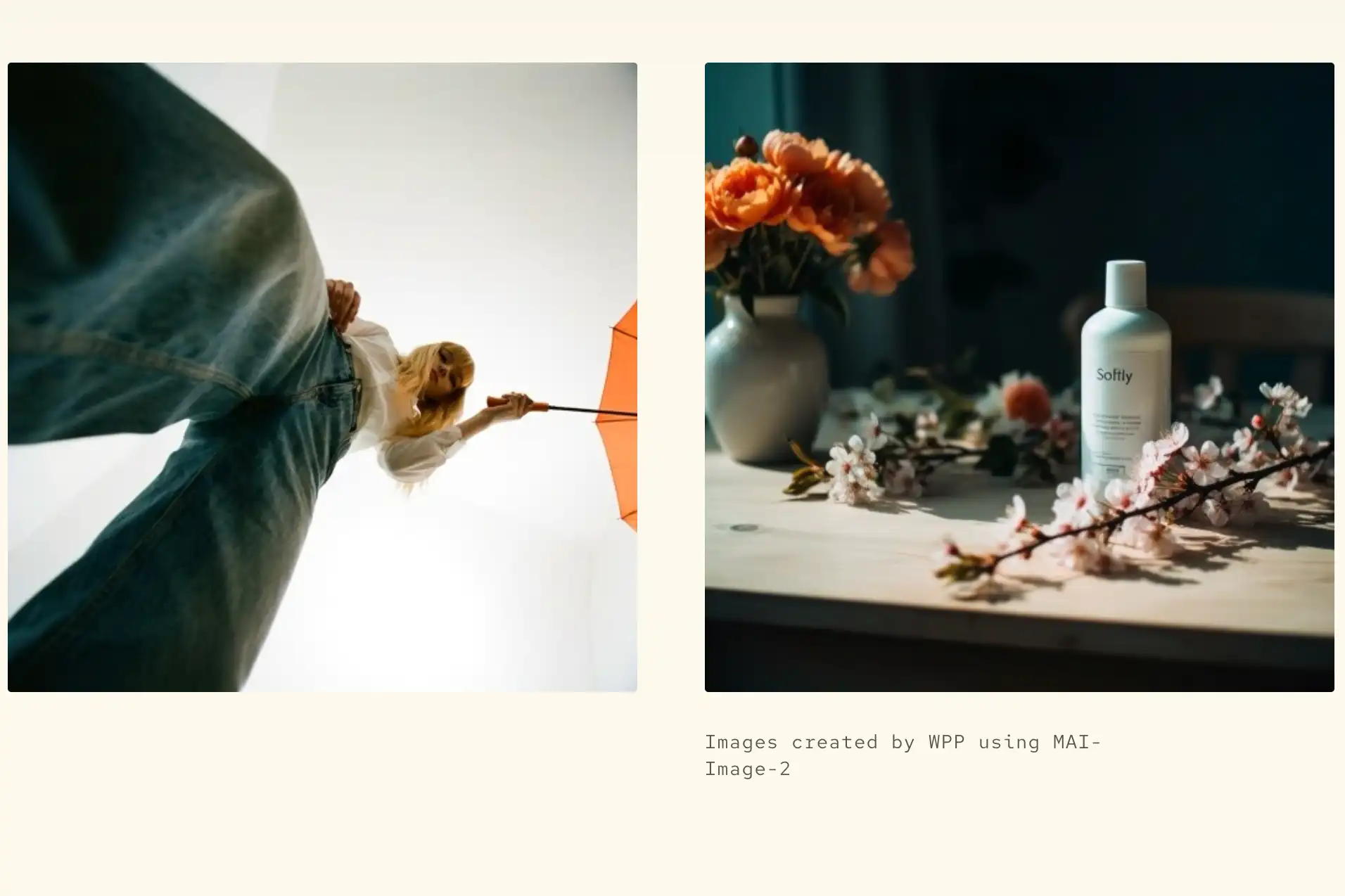

- MAI-Image-2 expands Microsoft’s push into multimodal AI development tools.

Microsoft has introduced three new multimodal AI foundation models that transcribe speech into text, generate speech, and generate images. The release signals a deeper shift in Microsoft's strategy as it builds its own AI stack while continuing its partnership with OpenAI.

MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 are designed for developers and enterprises. They are now available through Microsoft Foundry and the MAI Playground testing environment. Together, they expand Microsoft's role in the fast-moving global race to build AI infrastructure.

What are the three new Microsoft MAI models?

Microsoft's MAI Superintelligence research team developed the new models. The team is led by Mustafa Suleyman and focuses on building practical multimodal systems.

Each model targets a different core AI capability.

MAI-Transcribe-1 converts speech into text across 25 languages. Microsoft says it operates 2.5 times faster than its Azure Fast transcription offering. This makes it suitable for real-time applications such as meetings, customer support, and media workflows.

MAI-Voice-1 generates speech output rapidly. It can produce 60 seconds of audio in just one second. It also allows developers to create custom voice styles, opening opportunities in assistants, narration tools, and accessibility services.

MAI-Image-2 generates images from text prompts. The model first appeared in MAI Playground in March and is now part of Microsoft Foundry's broader developer ecosystem.

Together, these models strengthen Microsoft’s multimodal AI capabilities across text, audio, and visual pipelines.

Why is Microsoft building its own AI models now?

Microsoft continues to invest heavily in AI infrastructure despite its long-term collaboration with OpenAI. The company has already committed more than $13 billion to the partnership. However, the latest releases show a parallel strategy taking shape.

Instead of depending on external models alone, Microsoft is building its own foundation layer. This allows tighter integration across enterprise software, developer tools, and cloud platforms.

According to Suleyman, the company’s goal is to create what he described as “Humanist AI.” The approach focuses on models trained for real-world communication and practical workflows rather than experimental benchmarks.

This shift also reflects broader competition across the AI sector. Companies including Google continue expanding multimodal capabilities across their own ecosystems.

Microsoft targets lower-cost AI deployment for developers

Pricing is emerging as a major differentiator in the foundation model market. Microsoft positions the new MAI models as lower-cost alternatives to competing systems.

- MAI-Transcribe-1 starts at $0.36 per hour of audio.

- MAI-Voice-1 starts at $22 per one million characters.

- MAI-Image-2 costs $5 per one million text input tokens and $33 per one million image output tokens.
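To show how these list prices translate into a budget, here is a minimal sketch of a cost estimator. It assumes billing scales linearly with the quoted starting prices (per audio hour, per million characters, per million tokens); real invoices may involve tiers, regional differences, or minimums not covered here. The function names and the example workload are hypothetical and not part of any Microsoft API.

```python
# Rough cost estimator for the MAI models, using the *starting* list prices
# quoted in the article. Assumption: cost scales linearly with usage.
# These constants and helpers are illustrative, not an official Microsoft API.

TRANSCRIBE_USD_PER_AUDIO_HOUR = 0.36  # MAI-Transcribe-1, per hour of audio
VOICE_USD_PER_M_CHARS = 22.0          # MAI-Voice-1, per 1,000,000 characters
IMAGE_USD_PER_M_TEXT_IN = 5.0         # MAI-Image-2, per 1,000,000 text input tokens
IMAGE_USD_PER_M_IMAGE_OUT = 33.0      # MAI-Image-2, per 1,000,000 image output tokens

MILLION = 1_000_000


def transcription_cost(audio_hours: float) -> float:
    """Cost of transcribing `audio_hours` hours of audio."""
    return audio_hours * TRANSCRIBE_USD_PER_AUDIO_HOUR


def voice_cost(characters: int) -> float:
    """Cost of synthesizing speech from `characters` characters of text."""
    return characters / MILLION * VOICE_USD_PER_M_CHARS


def image_cost(text_input_tokens: int, image_output_tokens: int) -> float:
    """Cost of image generation, billed separately for input and output tokens."""
    return (text_input_tokens / MILLION * IMAGE_USD_PER_M_TEXT_IN
            + image_output_tokens / MILLION * IMAGE_USD_PER_M_IMAGE_OUT)


def estimate(audio_hours: float, characters: int,
             text_input_tokens: int, image_output_tokens: int) -> dict:
    """Per-model costs plus a total for one hypothetical workload."""
    costs = {
        "MAI-Transcribe-1": transcription_cost(audio_hours),
        "MAI-Voice-1": voice_cost(characters),
        "MAI-Image-2": image_cost(text_input_tokens, image_output_tokens),
    }
    costs["total"] = sum(costs.values())
    return costs


if __name__ == "__main__":
    # Hypothetical monthly workload: 1,000 hours of recorded calls,
    # 500,000 characters of narration, 2M text input tokens and
    # 1M image output tokens.
    for name, usd in estimate(1_000, 500_000, 2_000_000, 1_000_000).items():
        print(f"{name:>17}: ${usd:,.2f}")
```

For that hypothetical workload, the sketch prints $360.00 for transcription, $11.00 for voice, $43.00 for images, and $414.00 in total. Keeping each model's rate in a separate constant makes it easy to swap in updated or negotiated prices.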

Lower pricing could make the models attractive for startups and enterprise teams building production workflows at scale.

Cost efficiency is increasingly important as organizations integrate AI into daily operations such as transcription automation, voice interfaces, and image creation pipelines.

Where are the MAI models available right now?

Microsoft has released the models across two primary environments.

Microsoft Foundry provides enterprise-ready deployment tools. Developers can integrate the models into production systems through APIs and cloud workflows.

MAI Playground serves as a testing platform. It allows experimentation with model capabilities before full deployment.

MAI-Image-2 first launched in Playground in March. All three models are now accessible through Foundry, and the transcription and voice models are also available in Playground.

This dual rollout strategy supports both experimentation and enterprise adoption.

How does this fit into Microsoft’s long-term AI strategy?

The MAI releases reflect Microsoft’s evolving position in the global foundation model landscape. Instead of relying only on partnerships, the company is building internal research pipelines capable of producing multimodal systems.

At the same time, Microsoft continues working closely with OpenAI across products such as Copilot and Azure AI services. According to Suleyman, recent partnership adjustments created more room for independent superintelligence research.

The strategy mirrors Microsoft’s approach to chips. The company designs some hardware internally while still sourcing from external partners.

Similarly, its AI roadmap now combines collaboration with in-house innovation.

As competition accelerates across the multimodal AI ecosystem, Microsoft’s latest releases signal a stronger push toward platform independence while expanding developer access to scalable tools. With MAI-Transcribe-1, MAI-Voice-1, and MAI-Image-2 entering production channels, Microsoft is positioning itself more directly inside the foundation model race.